Key Features of ndCurveMaster

ndCurveMaster automatically generates multiple alternative nonlinear equations from your data, quickly and without coding.

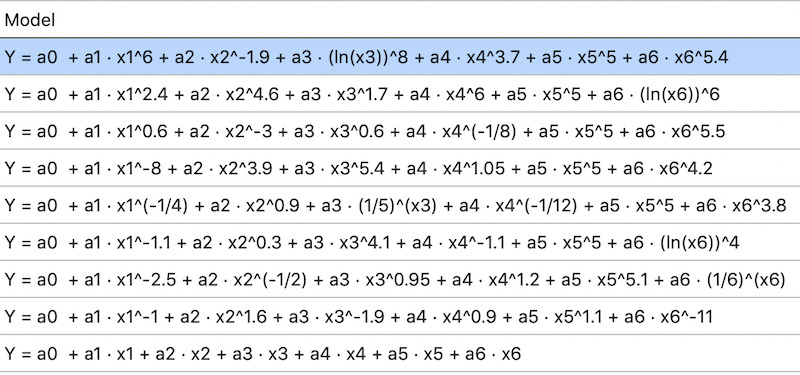

Models can include just a few predictors or advanced variable combinations, for example:

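- Simple nonlinear model:

$$
Y = a_0 + a_1 \cdot \exp(x_1)^{-0.5} + a_2 \cdot (\ln(x_2))^{8} + a_3 \cdot \exp(x_3)^{1.5} + a_4 \cdot x_4^{4.1} + a_5 \cdot x_5^{5} + a_6 \cdot x_6^{6}
$$

- Model with variable combinations:

$$
\begin{aligned}
Y ={}& a_0 + a_1 \cdot \exp(x_1)^{-0.1} + a_2 \cdot x_2 + a_3 \cdot x_3 + a_4 \cdot x_4^{1/8} \\
&+ a_5 \cdot x_1^{0.9} \cdot x_2^{0.9} + a_6 \cdot x_1^{1.35} \cdot x_3 + a_7 \cdot (\ln(x_1))^{5} \cdot \ln(x_4) + a_8 \cdot x_2 \cdot x_3 \\
&+ a_9 \cdot (\ln(x_3))^{8} \cdot x_4^{0.55} + a_{10} \cdot x_1^{1.25} \cdot x_2 \cdot x_3 + a_{11} \cdot x_2^{1/2} \cdot x_3^{6} \cdot x_4^{0.8}
\end{aligned}
$$

- High-order polynomial model:

$$
\begin{aligned}
Y ={}& a_0 + a_1 \cdot x_1 + a_2 \cdot x_2 + a_3 \cdot x_3 + a_4 \cdot x_1 \cdot x_2 + a_5 \cdot x_1 \cdot x_3 + a_6 \cdot x_2 \cdot x_3 + a_7 \cdot x_1 \cdot x_2 \cdot x_3 \\
&+ a_8 \cdot x_1^{2} + a_9 \cdot x_2^{2} + a_{10} \cdot x_3^{2} + a_{11} \cdot x_1^{3} + a_{12} \cdot x_2^{3} + a_{13} \cdot x_3^{3} \\
&+ a_{14} \cdot x_1^{2} \cdot x_2 + a_{15} \cdot x_1 \cdot x_2^{2} + a_{16} \cdot x_1^{2} \cdot x_3 + a_{17} \cdot x_1 \cdot x_3^{2} + a_{18} \cdot x_2 \cdot x_3^{2} + a_{19} \cdot x_2^{2} \cdot x_3 \\
&+ a_{20} \cdot x_1^{2} \cdot x_2 \cdot x_3 + a_{21} \cdot x_1 \cdot x_2^{2} \cdot x_3 + a_{22} \cdot x_1 \cdot x_2 \cdot x_3^{2}
\end{aligned}
$$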
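All three model forms above are linear in the coefficients a0, a1, ...: each term is a fixed transformation of one or more predictors multiplied by a coefficient. As an illustration only, the following Python sketch evaluates the simple nonlinear model for one observation. The function names and the coefficient values are hypothetical and are not ndCurveMaster output.

```python
import math

def simple_model_terms(x):
    """Return the basis terms of the simple nonlinear model for one observation.

    x is a sequence (x1, ..., x6). x2 must be positive for ln(x2), and x4
    must be positive because it is raised to a non-integer power.
    """
    x1, x2, x3, x4, x5, x6 = x
    return [
        1.0,                     # multiplies the intercept a0
        math.exp(x1) ** -0.5,    # exp(x1)^-0.5
        math.log(x2) ** 8,       # (ln(x2))^8
        math.exp(x3) ** 1.5,     # exp(x3)^1.5
        x4 ** 4.1,               # x4^4.1
        x5 ** 5,                 # x5^5
        x6 ** 6,                 # x6^6
    ]

def predict(coeffs, x):
    """Evaluate Y = a0 + a1*term1 + ... + a6*term6 for coefficients a0..a6."""
    terms = simple_model_terms(x)
    return sum(a * t for a, t in zip(coeffs, terms))

# Hypothetical coefficients a0..a6, chosen only to show the call.
example_coeffs = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0]
example_y = predict(example_coeffs, (0.0, math.e, 0.0, 1.0, 1.0, 1.0))
```

Because the model is linear in the coefficients, fitting it reduces to ordinary least squares on the transformed terms once the transformations are chosen.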

After generating the models, ndCurveMaster evaluates their quality using:

- Fit statistics: R Square, Adjusted R Square, Pearson’s R, Concordance Correlation Coefficient (CCC), Standard Error, RRMSE, RMSE for training and test sets

- Variance analysis: ANOVA (F-statistic)

- Parameter significance: t-statistics and confidence intervals

- Residual normality assessment: Q–Q plots and statistical tests (Shapiro–Wilk, Anderson–Darling)

- Variable sensitivity analysis: Applied when residuals deviate from normality

- Multicollinearity detection: Variance Inflation Factor (VIF) and Pearson correlation matrix

- Overfitting detection: Cross-validation–based assessment

- Agreement analysis: Bland–Altman test for assessing agreement between predicted and observed values

- Heteroscedasticity diagnostics: Visual inspection using residual plots

- Information criteria: Akaike Information Criterion (AIC) for objective model ranking by balancing goodness of fit and model complexity
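Several of the fit statistics listed above can be computed directly from observed and predicted values. The sketch below is a minimal illustration using common textbook definitions. The exact formulas ndCurveMaster uses are not stated here, so the RRMSE normalization (RMSE divided by the absolute mean of the observations) and the least-squares form of AIC are assumptions, and the function name is hypothetical.

```python
import math

def fit_statistics(y_obs, y_pred, n_params):
    """Compute common fit statistics.

    n_params counts all fitted coefficients, including the intercept.
    Assumes the residual sum of squares is non-zero (AIC uses its logarithm).
    """
    n = len(y_obs)
    mean_obs = sum(y_obs) / n
    mean_pred = sum(y_pred) / n

    rss = sum((o - p) ** 2 for o, p in zip(y_obs, y_pred))  # residual sum of squares
    tss = sum((o - mean_obs) ** 2 for o in y_obs)           # total sum of squares

    r2 = 1.0 - rss / tss
    adj_r2 = 1.0 - (1.0 - r2) * (n - 1) / (n - n_params)
    rmse = math.sqrt(rss / n)
    rrmse = rmse / abs(mean_obs)  # assumed normalization

    # Lin's concordance correlation coefficient (population variances).
    var_obs = tss / n
    var_pred = sum((p - mean_pred) ** 2 for p in y_pred) / n
    cov = sum((o - mean_obs) * (p - mean_pred) for o, p in zip(y_obs, y_pred)) / n
    ccc = 2.0 * cov / (var_obs + var_pred + (mean_obs - mean_pred) ** 2)

    # AIC for least-squares fits, up to an additive constant (assumed form).
    aic = n * math.log(rss / n) + 2 * n_params

    return {"r2": r2, "adj_r2": adj_r2, "rmse": rmse,
            "rrmse": rrmse, "ccc": ccc, "aic": aic}

stats = fit_statistics([1.0, 2.0, 3.0, 4.0], [1.1, 1.9, 3.2, 3.8], n_params=2)
```

A lower AIC indicates a better trade-off between fit and complexity, so AIC values are only meaningful when comparing models fitted to the same data.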

Model search is powered by heuristic, randomized, and Monte Carlo–based optimization methods, which iteratively optimize the selection and transformation of base functions. The Monte Carlo strategy enables effective exploration of the search space and helps avoid convergence to local minima, leading to more robust and physically meaningful model structures.
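To illustrate the general idea of a randomized search over base-function transformations, the sketch below repeatedly draws a random exponent for a single predictor, fits Y = a0 + a1 · x^p by ordinary least squares, and keeps the best result. It is a plain random search written for illustration, not ndCurveMaster's actual algorithm. All function names, the exponent list, and the data are hypothetical.

```python
import random

def fit_line(t, y):
    """Ordinary least squares for y = a0 + a1*t; returns (a0, a1, rss).

    Assumes the values in t are not all identical.
    """
    n = len(t)
    mean_t = sum(t) / n
    mean_y = sum(y) / n
    s_tt = sum((ti - mean_t) ** 2 for ti in t)
    s_ty = sum((ti - mean_t) * (yi - mean_y) for ti, yi in zip(t, y))
    a1 = s_ty / s_tt
    a0 = mean_y - a1 * mean_t
    rss = sum((yi - a0 - a1 * ti) ** 2 for ti, yi in zip(t, y))
    return a0, a1, rss

def random_exponent_search(x, y, exponents, iterations=200, seed=0):
    """Randomly sample exponents p and keep the fit of a0 + a1*x**p with lowest RSS.

    Assumes every x is positive so that fractional and negative powers are defined.
    """
    rng = random.Random(seed)
    best = None
    for _ in range(iterations):
        p = rng.choice(exponents)
        transformed = [xi ** p for xi in x]
        a0, a1, rss = fit_line(transformed, y)
        if best is None or rss < best[3]:
            best = (p, a0, a1, rss)
    return best

# Synthetic data generated from Y = 2 + 3 * x^2.
x_data = [1.0, 2.0, 3.0, 4.0, 5.0]
y_data = [2.0 + 3.0 * xi ** 2 for xi in x_data]
best_p, best_a0, best_a1, best_rss = random_exponent_search(
    x_data, y_data, exponents=[-1, 0.5, 1, 2, 3]
)
```

With several predictors the candidate space grows combinatorially, which is why sampling it at random can be more practical than enumerating every combination.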


During the regression search, ndCurveMaster allows you to actively optimize model discovery by applying user-defined quality constraints:

- Control multicollinearity: Set a maximum acceptable Variance Inflation Factor (VIF) threshold.

- Limit overfitting: Restrict model complexity and keep the divergence between training and test error within a user-defined tolerance.

- Enforce statistical significance: Retain only models that meet the predefined F-test criterion and in which every predictor meets the predefined t-test criterion.

- Avoid proportional bias: Exclude models exhibiting proportional bias based on the Bland–Altman test.

- Constrain regression coefficients: Specify minimum acceptable values for regression coefficients.

- Lock selected predictors: Fix chosen variables to remain unchanged during the search.

- Accelerate discovery: Speed up the search by restricting it to fundamental functions, then perform a full search if needed.
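Conceptually, the quality constraints above act as a filter on candidate models. The sketch below shows one way such a filter could look, assuming the diagnostics have already been computed for each candidate. The `CandidateModel` fields, the default thresholds, and the relative-error overfitting measure are all hypothetical choices made for illustration and do not describe ndCurveMaster's internals.

```python
from dataclasses import dataclass

@dataclass
class CandidateModel:
    """Hypothetical container for precomputed diagnostics of one model."""
    name: str
    max_vif: float            # largest VIF among the predictors
    max_p_value: float        # largest t-test p-value among the predictors
    train_rmse: float
    test_rmse: float
    proportional_bias: bool   # outcome of a Bland–Altman proportional-bias check

def passes_constraints(model, vif_limit=10.0, alpha=0.05, overfit_tolerance=0.2):
    """Return True if the model satisfies every user-defined constraint."""
    if model.max_vif > vif_limit:
        return False  # too much multicollinearity
    if model.max_p_value > alpha:
        return False  # at least one predictor is not significant
    divergence = (model.test_rmse - model.train_rmse) / model.train_rmse
    if divergence > overfit_tolerance:
        return False  # test error diverges too far from training error
    if model.proportional_bias:
        return False  # proportional bias detected
    return True

candidates = [
    CandidateModel("A", 3.0, 0.01, 1.0, 1.1, False),
    CandidateModel("B", 25.0, 0.01, 1.0, 1.1, False),
    CandidateModel("C", 3.0, 0.01, 1.0, 1.5, False),
    CandidateModel("D", 3.0, 0.01, 1.0, 1.1, True),
]
accepted = [m.name for m in candidates if passes_constraints(m)]
```

Applying the constraints during the search, rather than only to the final list, avoids spending time refining models that would be rejected anyway.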

ndCurveMaster uses up to 380 built-in base functions (power, exponential, logarithmic, trigonometric), organized in 5 collections, plus your own custom formulas.

Full Summary of Features

- Fully automated search and fitting of nonlinear equations to data using regression methods

- Model evaluation using R Square, Adjusted R Square, Pearson correlation coefficient, Concordance Correlation Coefficient (CCC), RMSE, RRMSE, Standard Error, and Akaike Information Criterion (AIC)

- Normality testing of residuals (Shapiro–Wilk, Anderson–Darling) and Q–Q plots

- Bland–Altman analysis to assess model reliability

- Variable sensitivity analysis for non-normal residuals

- Detection and prevention of overfitting using cross-validation

- Multicollinearity detection via VIF and Pearson Correlation Matrix

- Polynomial regression of any order and combination of variables

- Support for an unlimited number of variables and complex combinations

- Heuristic and full random search algorithms for better model discovery

- Multi-threaded processing for faster computation

- Support for weighted data

- History and ranking of models

- Option to manually expand or reduce models

- Import data from CSV, TXT, XLSX, XLS

- Access to 380+ built-in functions plus user-defined functions

- Generation of model code in C/C++ and Pascal, plus export to Python

- Copy, save, or reload results easily

- High-quality 2D plots: fitted lines, residuals, histograms

- Set your own alpha significance level

- Optimized to handle large datasets
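As a small illustration of the multicollinearity check listed above, the following sketch computes the Pearson correlation between two predictors and the resulting Variance Inflation Factor. For a model with exactly two predictors, VIF = 1 / (1 − r²), where r is their Pearson correlation. With more predictors, each VIF comes from regressing one predictor on all the others, which is not shown here. The function names are hypothetical.

```python
import math

def pearson_r(a, b):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(a)
    mean_a = sum(a) / n
    mean_b = sum(b) / n
    s_ab = sum((ai - mean_a) * (bi - mean_b) for ai, bi in zip(a, b))
    s_aa = sum((ai - mean_a) ** 2 for ai in a)
    s_bb = sum((bi - mean_b) ** 2 for bi in b)
    return s_ab / math.sqrt(s_aa * s_bb)

def two_predictor_vif(a, b):
    """VIF shared by both predictors in a two-predictor model: 1 / (1 - r^2)."""
    r = pearson_r(a, b)
    if abs(r) >= 1.0:
        return math.inf  # perfectly collinear predictors
    return 1.0 / (1.0 - r * r)

x1 = [1.0, 2.0, 3.0, 4.0]
x2 = [1.0, 3.0, 2.0, 4.0]
r_12 = pearson_r(x1, x2)
vif_12 = two_predictor_vif(x1, x2)
```

A VIF of 1 means the predictors are uncorrelated, and larger values indicate that the coefficient estimates are increasingly inflated by correlation between predictors.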

Examples & Tutorials

See how the features listed above are applied in real data-driven modelling workflows. The tutorials below show practical examples of nonlinear regression, curve fitting, model validation, and equation discovery using ndCurveMaster.

- Nonlinear regression workflow – build, validate, and optimise models step by step

- Bland–Altman analysis – remove proportional bias and assess model reliability

- Multicollinearity elimination – detect and handle correlated variables using VIF

- Curve fitting – move from simple polynomial models to accurate nonlinear equations

- 4D modelling – fit functions with multiple input variables

- Equation discovery – identify physical relationships directly from data

- Differential equations – estimate constants of general solutions from measurements